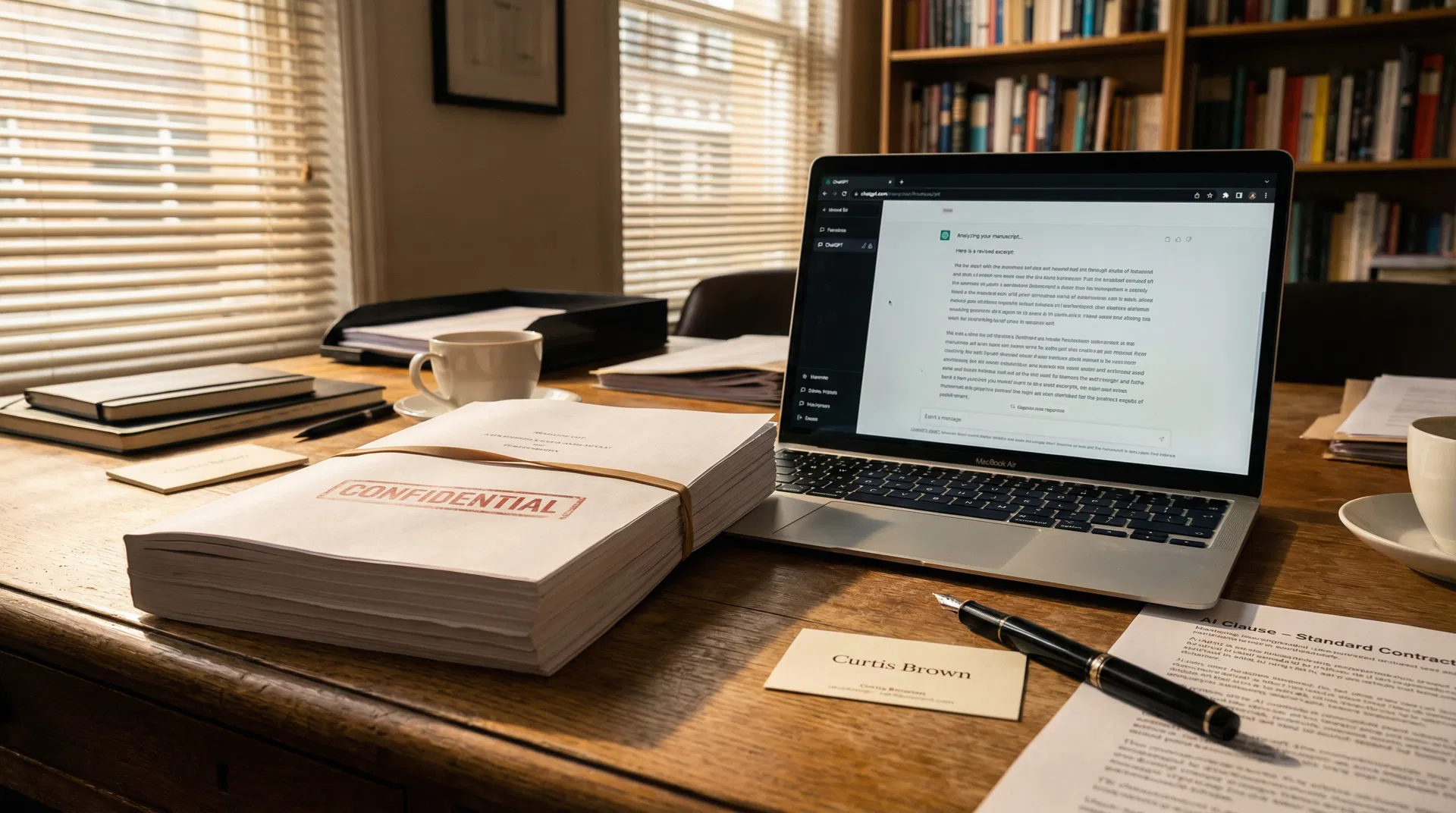

Editors Are Uploading Confidential Manuscripts to ChatGPT, Agent Claims — Agencies Respond with AI Clauses

Gordon Wise of Curtis Brown publicly alleged at the London Book Fair (LBF) 2026 that some acquiring editors are uploading uncontracted manuscripts to ChatGPT to generate summaries quickly. Curtis Brown, Aitken Alexander, and PFD are introducing AI-specific clauses in submission agreements. Sam Copeland called the practice 'an absolute outrage.'

Analysis

The allegation made by Gordon Wise at the London Book Fair is, in one sense, entirely predictable. Publishing houses are under the same productivity pressures as every other knowledge industry: too many submissions, too little time, and a technology that promises to compress the reading and assessment process from days to minutes. The surprise is not that some editors may have reached for ChatGPT to help manage their inboxes — it is that the practice has become visible enough to prompt a public statement from one of the UK's most prominent literary agencies.

The legal exposure is significant and underappreciated. When an editor uploads an uncontracted manuscript to a commercial AI platform, they are transferring a third party's intellectual property to a system whose training and data retention practices are governed by the platform's own terms of service, not by any agreement with the author. OpenAI's consumer terms permit the use of uploaded content to improve its models unless users explicitly opt out, a step that many casual users may never take. The author whose manuscript is uploaded has not consented to this, has no visibility into what happens to their work, and has no contractual relationship with the platform through which to seek recourse. Clare Alexander of Aitken Alexander put the point directly: "taking author's words to 'teach' AI is simple theft."

The response from the agency community has been swift and structural. The introduction of AI-specific clauses into submission agreements is not merely a symbolic gesture — it creates a contractual basis for agencies to withdraw submissions from publishers who violate the terms, and potentially to pursue damages if confidential material is demonstrably misused. Will Francis of Janklow & Nesbit articulated the deeper principle at stake: "I need to be confident that the book I am reading was created by a writer having subjective experiences, by someone with an interior world." That confidence is precisely what the editorial process is supposed to provide, and it cannot be outsourced to a language model.

The broader context matters here. The Shy Girl cancellation demonstrated that AI-generated content can enter the publishing pipeline without the knowledge of the publisher. The manuscript-uploading allegation demonstrates the inverse: that AI can enter the assessment pipeline without the knowledge of the author. Both failures point to the same structural gap — the absence of clear, enforceable norms governing where AI begins and where human judgment is required. The agencies now writing those norms into their contracts are doing the work that the industry's trade associations have been slow to formalise.