As AI Discourse Rages, Publishing Has More Questions Than Answers

The cancellation of Mia Ballard's horror novel Shy Girl by Hachette's Orbit and Wildfire imprints has forced a reckoning across the publishing industry. Publishers Weekly's definitive post-mortem finds that Penguin Random House was the only Big Five publisher willing to comment publicly, while researchers and literary agents warn that AI accusations are "incredibly difficult to prove" and that the industry's contracts and detection tools remain wholly inadequate for the challenge ahead.

Analysis

The Shy Girl affair has now produced its most important document: a measured, industry-wide post-mortem from Publishers Weekly that reveals just how unprepared the traditional publishing ecosystem is for the AI authorship question it has spent two years pretending it could defer.

The most striking finding is the silence. Of the Big Five publishers, only Penguin Random House was willing to comment publicly — and even PRH's statement was carefully limited to describing internal editorial training for "setting parameters around AI use with authors." That four of the world's largest publishers declined to engage with the most consequential authorship scandal in a generation is itself a data point. It suggests either that their internal policies are too underdeveloped to defend publicly, or that their legal teams have concluded that any statement creates liability.

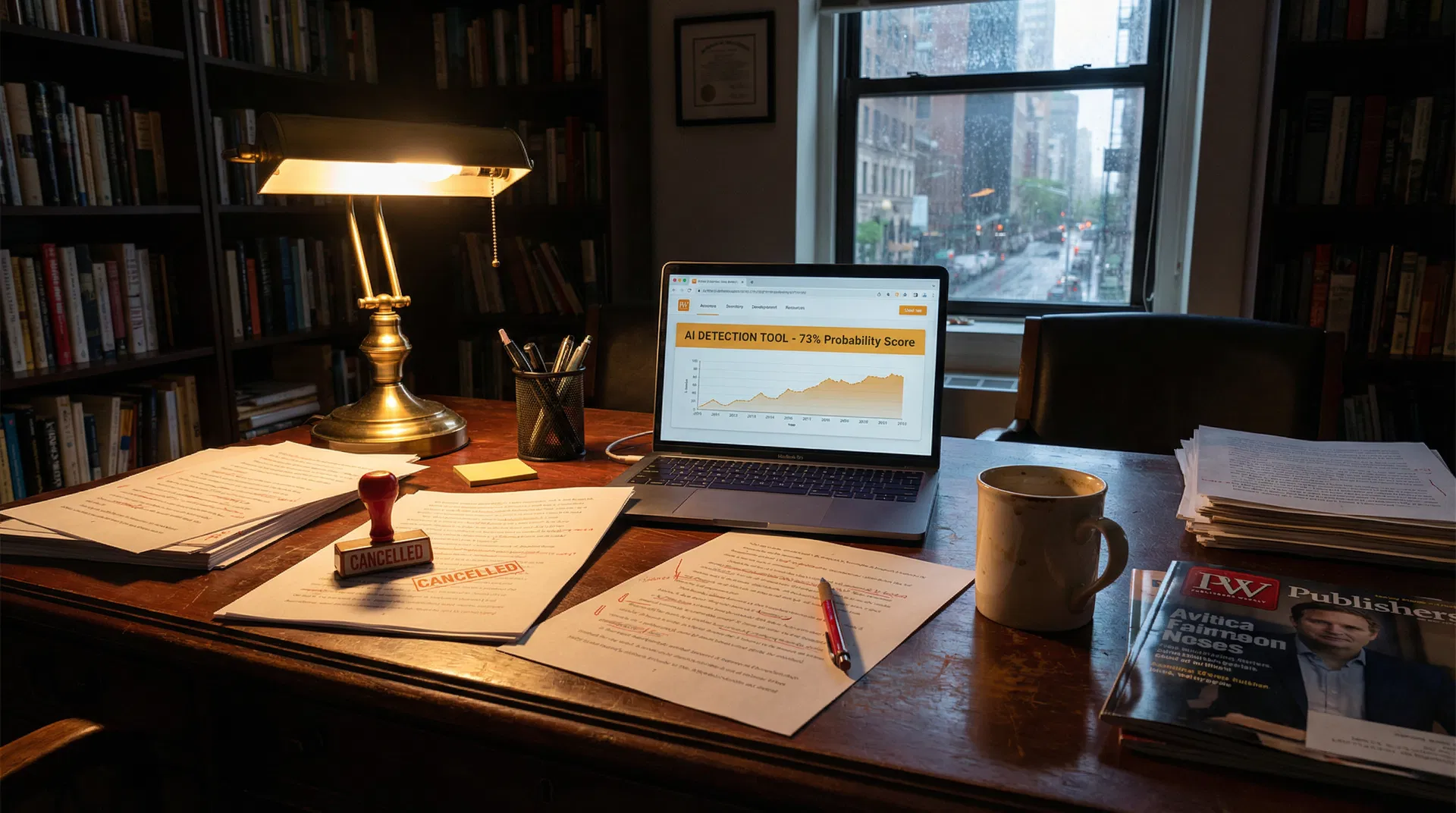

Rachel Noorda's warning deserves attention: AI accusations are "incredibly difficult to prove" and could lead to false claims against authors who do not use the technology. This is the double bind that the industry has created for itself. By cancelling Shy Girl on the basis of AI detection tools, which the research community consistently rates as unreliable, with false positive rates that make them unsuitable for high-stakes decisions, publishers have established a precedent that could be weaponised against any author whose prose happens to score poorly on a probabilistic classifier. The author's own account, that a friend she hired to edit the manuscript used AI without her knowledge, is plausible and legally significant. If it is true, it raises questions that no standard contract currently answers: where does editorial responsibility end, and where does authorial liability begin?

The agents quoted in the piece, Jennifer Weltz and Hannah Bowman, are correct that a lack of transparency and communication is the industry's greatest current peril. But transparency requires infrastructure: standardised disclosure clauses, agreed definitions of "AI use" that distinguish between generative drafting and AI-assisted grammar checking, and detection protocols that are fit for purpose. None of these exist at scale. What the industry has instead is a series of ad hoc cancellations, each one setting a slightly different precedent, with no coordinating body and no shared standard.

The Shy Girl case will not be the last. The question is whether the industry uses it as a forcing function to build the infrastructure it needs, or whether it retreats into silence and hopes the next scandal is someone else's problem.